- Blog

- What do medal of honor recipients receive

- Back to the future part iii mpaa rating 1990

- Organize mods folder sims 3

- What is the book of daniel

- Killer instinct ps4 sexy

- Harry potter deathly hallows part 2 free full movie

- Globalprotect apk

- Acrobat x1 pro trial

- Magoosh gre videos 2017 google drive link

- Watch undateable season 1 episode 1 online free

- Doctor who specials dvd

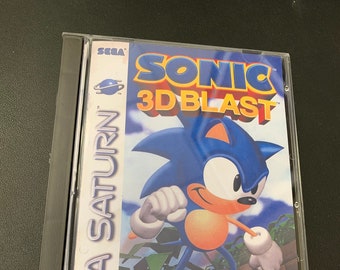

- Sonic the hedgehog 1-2-3 cases

- For za horizon 4 barn find map

- Easy logger pro apk download

- Sonu ki titu ki sweety movie full

- Xmen vs street fighter ost

- Chp car seat check

#Sonic the hedgehog 1,2,3 cases update

So we see that both of these clipping regions prevent us from getting too greedy: they stop us from updating too much at once, and from updating outside of the region where this sample offers a good approximation. If the ratio rt(θ) is pushed above 1+ε when the advantage is positive, or below 1-ε when the advantage is negative, the clipped objective is flat and the gradient is equal to 0 (no slope).

Why? Because we don't want to update our policy too much. With a positive advantage, the clip means that this action can't become 100x more probable than under the old policy.

Case 2: when the advantage Ât is smaller than 0, the same logic applies in the other direction: the action's probability should decrease, but the ratio is only rewarded for decreasing down to 1-ε.

To summarize, in the case of positive advantage, we want to increase the probability of taking that action at that step, but not too much. The reason is simple: taking that action at that state is only one try. It doesn't mean that it will always lead to a very positive reward, so we don't want to be too greedy, because that can lead to a bad policy.
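To make the "no slope" claim concrete, here is a minimal sketch of the per-sample clipped objective with a finite-difference check of its slope. The function names (`clipped_objective`, `slope`) and the default `eps=0.2` are illustrative assumptions, not code from the original implementation.

```python
def clipped_objective(ratio, advantage, eps=0.2):
    """Per-sample clipped surrogate: min(r * A, clip(r, 1 - eps, 1 + eps) * A)."""
    clipped_ratio = max(1.0 - eps, min(ratio, 1.0 + eps))
    return min(ratio * advantage, clipped_ratio * advantage)


def slope(ratio, advantage, eps=0.2, h=1e-6):
    """Central finite-difference estimate of d(objective) / d(ratio)."""
    up = clipped_objective(ratio + h, advantage, eps)
    down = clipped_objective(ratio - h, advantage, eps)
    return (up - down) / (2 * h)


# Positive advantage, ratio already above 1 + eps: flat, nothing left to gain.
flat_positive = slope(1.5, advantage=1.0)
# Negative advantage, ratio already below 1 - eps: flat as well.
flat_negative = slope(0.5, advantage=-1.0)
# Inside the clip range the objective still follows ratio * advantage.
inside = slope(1.0, advantage=1.0)
# Negative advantage with a ratio above 1 + eps is NOT clipped, because the
# minimum picks the more pessimistic unclipped term.
pessimistic = slope(1.5, advantage=-1.0)
```

Note the last case: the minimum keeps the gradient alive when the policy has moved in the wrong direction, so only updates that would make the objective look better are cut off.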

However, because of the clip, rt(θ) will only grow to as much as 1+ε.

So today, we'll dive into the PPO architecture and we'll implement a Proximal Policy Optimization (PPO) agent that learns to play Sonic the Hedgehog 1, 2 and 3! However, if you want to be able to understand PPO, you first need to master A2C. If that's not the case, read the A2C tutorial first.

To see the problem with the Policy Gradient objective function, remember that when we studied Policy Gradients, we learned about the Policy Objective Function or, if you prefer, the Policy Loss.

#Sonic the hedgehog 1,2,3 cases manual

If you read my article about A2C with Sonic the Hedgehog, we already implemented that. Moreover, PPO introduced another innovation: training the agent by running K epochs of gradient descent over sampled mini-batches.
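The "K epochs over mini-batches" loop can be sketched roughly as follows. This only shows how one batch of collected experience might be reshuffled and split on each epoch; the helper name `minibatch_indices` and its parameters are hypothetical, and the actual gradient step on each mini-batch is left out.

```python
import random


def minibatch_indices(n_samples, batch_size, n_epochs, seed=0):
    """Yield (epoch, indices) pairs: each epoch reshuffles the same samples
    and walks through them in mini-batches of at most batch_size."""
    rng = random.Random(seed)
    indices = list(range(n_samples))
    for epoch in range(n_epochs):
        rng.shuffle(indices)  # new sample order for every epoch
        for start in range(0, n_samples, batch_size):
            yield epoch, indices[start:start + batch_size]


# Example: 8 collected steps, mini-batches of 4, K = 3 epochs.
batches = list(minibatch_indices(n_samples=8, batch_size=4, n_epochs=3))
```

In a real agent, each yielded index list would select the states, actions, advantages and old action probabilities for one gradient descent step, so the same rollout is reused K times before new experience is collected.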

Doing that will ensure that our policy update will not be too large.

The central idea of Proximal Policy Optimization is to avoid having too large a policy update. To do that, we use a ratio that tells us the difference between our new and old policy, and we clip this ratio to the range from 0.8 to 1.2. This breakthrough was made possible thanks to a strong hardware architecture and by using a state-of-the-art algorithm: PPO, aka Proximal Policy Optimization.
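As a rough illustration of that ratio, the sketch below computes it from the log-probabilities that the new and old policies assign to the same action, then clips it to [0.8, 1.2] (that is, ε = 0.2). Working with log-probabilities is an assumption made here for numerical convenience, and the function names are hypothetical.

```python
import math


def probability_ratio(new_log_prob, old_log_prob):
    """Ratio pi_new(a|s) / pi_old(a|s), computed from log-probabilities."""
    return math.exp(new_log_prob - old_log_prob)


def clip_ratio(ratio, eps=0.2):
    """Restrict the ratio to [1 - eps, 1 + eps], i.e. [0.8, 1.2] for eps = 0.2."""
    return max(1.0 - eps, min(ratio, 1.0 + eps))


# The new policy makes the action twice as likely as the old one did
# (0.6 vs 0.3), but the clipped ratio is capped at the upper bound.
ratio = probability_ratio(math.log(0.6), math.log(0.3))
capped = clip_ratio(ratio)
```

A ratio of 1 means the two policies agree on that action; values far from 1 indicate a large change, which is exactly what the clip refuses to reward.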